Skills

Over the years I've built up a wide coding skillset, mainly shaped by my studies in AI and game design.

I've now specialized in Python, building various applications and models. I also have experience with other languages and tools.

Projects

IMDb category generalization

Predicting movie themes based on their metadata. Generalizing categories for better classification.

Workflow

1. Data Collection: I gathered a dataset of movies from IMDb, including metadata such as genre, plot summary, cast, and user ratings.

2. Data Preprocessing: I cleaned the data by handling missing values, normalizing text fields, and encoding categorical variables.

3. Feature Engineering: I created new features based on the existing metadata, such as the number of genres, average user rating, and presence of certain keywords in the plot summary.

4. Model Training: I trained various models using Scikit-learn to predict movie themes based on chosen features.

5. Evaluation: I evaluated the model's performance using metrics such as accuracy, precision, recall, and F1-score.
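
The evaluation metrics from step 5 can be illustrated with a minimal, dependency-free sketch. It is not the project's evaluation code (Scikit-learn's `sklearn.metrics.f1_score` with `average="macro"` computes the same macro figure); the function names and the toy labels below are hypothetical and only show how precision, recall, and F1 relate per class.

```python
from collections import Counter


def per_class_scores(y_true, y_pred):
    """Return {label: (precision, recall, f1)} for every label seen."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted this label, but it was wrong
            fn[truth] += 1  # missed the true label
    scores = {}
    for label in sorted(set(y_true) | set(y_pred)):
        p_den = tp[label] + fp[label]
        r_den = tp[label] + fn[label]
        precision = tp[label] / p_den if p_den else 0.0
        recall = tp[label] / r_den if r_den else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        scores[label] = (precision, recall, f1)
    return scores


def macro_f1(y_true, y_pred):
    """Unweighted mean of the per-class F1 scores."""
    scores = per_class_scores(y_true, y_pred)
    return sum(f1 for _, _, f1 in scores.values()) / len(scores)


# Toy labels, made up purely for illustration.
y_true = ["crime", "crime", "romance", "romance", "war"]
y_pred = ["crime", "romance", "romance", "romance", "crime"]

for label, (p, r, f1) in per_class_scores(y_true, y_pred).items():
    print(f"{label}: precision={p:.2f} recall={r:.2f} f1={f1:.2f}")
print(f"macro F1 = {macro_f1(y_true, y_pred):.2f}")
```

Macro averaging weights every theme equally, so rare themes that the model never predicts correctly pull the score down noticeably.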

Tech Stack

Python: Used for data manipulation, feature engineering, and model training. Used various libraries such as Pandas, NumPy, and Matplotlib.

Scikit-learn: Used for building and evaluating machine learning models.

NLTK: Used for analyzing plot summaries, mainly to look for sentiment as well as keywords. Also used to combine themes that fit together.

Results

Testing the models showed how difficult it is to predict movie themes accurately. The best-performing model, XGBoost, achieved an F1-score of 0.55: it captured some patterns in the data, but there is still room for improvement. Generalizing the categories did help, as it made the relationships between the features and the themes easier to capture. It raised the F1-score from 0.35 to 0.55, which is a substantial improvement.
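
Category generalization can be sketched as a simple label mapping applied before training. The mapping, theme names, and `generalize` helper below are hypothetical; the project itself used NLTK to decide which themes fit together, which this sketch replaces with a hand-written dictionary.

```python
from collections import Counter

# Hypothetical mapping from fine-grained themes to broader categories.
THEME_GROUPS = {
    "heist": "crime",
    "gangster": "crime",
    "detective": "crime",
    "rom-com": "romance",
    "love triangle": "romance",
}


def generalize(theme, groups=THEME_GROUPS):
    """Map a fine-grained theme to its broader category.

    Themes without an entry in the mapping are returned unchanged.
    """
    return groups.get(theme, theme)


# Toy labels, made up for illustration.
fine_labels = ["heist", "gangster", "rom-com", "detective", "love triangle", "war"]
broad_labels = [generalize(theme) for theme in fine_labels]

print(Counter(fine_labels))   # six classes with one example each
print(Counter(broad_labels))  # three classes, two of them with several examples
```

Merging labels this way leaves fewer classes with more examples each, which is one plausible reason a classifier scores higher on the generalized targets.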

TV Show Script Generation

Generating TV show scripts using natural language processing. Using parsing methods to create unique dialogue.

Workflow

1. Data Collection: We used web scraping to create a dataset of existing TV show scripts.

2. Data Preprocessing: I cleaned the data by removing punctuation, attributing each line to the character who speaks it, and creating a structured format that would be easy to parse.

3. Parsing: I used NLTK to parse the sentences in the scripts, looking at the structure of the sentences and how they were built (classifying word types, and probabilities of word appearances). I then created a model that could generate new sentences based on this structure.
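
The parsing step can be sketched roughly as follows. This is an illustration under assumptions, not the project's model: the tagged lines are hand-written toy data standing in for the output of a part-of-speech tagger such as NLTK's `nltk.pos_tag`, and the helper names are hypothetical. It counts which tag sequences occur and how often each word appears under each tag, then samples a new sentence from those counts.

```python
import random
from collections import Counter, defaultdict

# Hand-tagged toy lines (hypothetical); a real pipeline would get these
# (word, tag) pairs from a part-of-speech tagger.
tagged_lines = [
    [("I", "PRP"), ("love", "VBP"), ("coffee", "NN")],
    [("You", "PRP"), ("hate", "VBP"), ("pizza", "NN")],
    [("I", "PRP"), ("love", "VBP"), ("pizza", "NN")],
]

templates = Counter()               # how often each tag sequence occurs
word_counts = defaultdict(Counter)  # tag -> how often each word carries it
for line in tagged_lines:
    templates[tuple(tag for _, tag in line)] += 1
    for word, tag in line:
        word_counts[tag][word] += 1


def sample(counter, rng):
    """Draw one key from a Counter, weighted by its count."""
    items = list(counter.keys())
    weights = list(counter.values())
    return rng.choices(items, weights=weights, k=1)[0]


def generate(rng):
    """Pick a sentence structure, then fill each slot with a weighted word."""
    template = sample(templates, rng)
    return " ".join(sample(word_counts[tag], rng) for tag in template)


rng = random.Random(0)  # seeded so runs are repeatable
for _ in range(3):
    print(generate(rng))
```

Building one set of counts per character would be a natural way to get character-specific language, at the cost of needing enough lines per character.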

Tech Stack

Python: Used for data manipulation as well as scraping of the scripts.

NLTK: Used for parsing and analyzing the text data: classifying each word by its role in the sentence and calculating the probabilities of word appearances based on the dataset.

Results

The results of the project showed that while the generated scripts were not perfect, they were able to produce decent sentences. The model was able to capture character-specific language and sentence structure. However, there were still some issues with coherence and consistency in the generated scripts, which is an area for future improvement. For future work, I would like to explore more advanced language models, such as transformer-based models, to improve the quality of the generated scripts.

Diabetes Data Analysis

Studying the effects of income class on diabetes. Full data analysis on income class, country wealth, as well as other factors.

Workflow

1. Data Collection: I gathered several datasets containing information about diabetes patients and their socioeconomic backgrounds.

2. Data Preprocessing: I cleaned the data by handling missing values and normalizing numerical features.

3. Exploratory Data Analysis: I visualized the data to understand its distribution and identify any patterns or outliers.

4. Statistical Testing: I did various statistical tests to determine the significance of the relationships between variables.
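
As an illustration of step 4, here is a minimal sketch of a chi-square test of independence, one common choice for comparing categorical variables such as income class and diabetes status. Which tests the analysis actually used is not stated here, so treat this as an assumption; the counts are made up, and `scipy.stats.chi2_contingency` would be the usual library route.

```python
def chi_square(table):
    """Pearson chi-square statistic and degrees of freedom.

    `table` is a contingency table given as a list of rows of counts.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Count expected in this cell if rows and columns were independent.
            expected = row_totals[i] * col_totals[j] / total
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof


# Made-up counts for illustration only.
# Rows: low, middle, high income. Columns: diabetes, no diabetes.
toy_counts = [
    [30, 70],
    [20, 80],
    [10, 90],
]

stat, dof = chi_square(toy_counts)
print(f"chi-square = {stat:.2f}, dof = {dof}")  # chi-square = 12.50, dof = 2
```

For 2 degrees of freedom the 5% critical value is about 5.99, so a statistic of 12.5 on this toy table would count as a significant association.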

Tech Stack

Python: Used for data manipulation. Used various libraries such as Pandas, Plotly, Seaborn, and more.

Jupyter Notebook: Used for experimentation and visualization of the data analysis.

Analysis Results

The analysis revealed a strong correlation between income class and diabetes prevalence, with lower-income groups showing higher rates of diabetes. Country wealth was also found to be a contributing factor, with wealthier countries generally having better healthcare access and lower diabetes rates. Other factors such as age and BMI were also found to have a high impact on diabetes risk.

Discord Producer Tags

A Discord bot that gives producer tags and plays them whenever you join a voice channel.

Workflow

1. Bot Creation: I created a Discord bot using the discord.py library and set up the necessary permissions in the Discord Developer Portal.

2. Tag Management: I implemented a bot function for users to set their unique producer tags, which are stored in a JSON file.

3. Determining Voice Channel: I added functionality to set the voice channel where the bot will play the producer tags.
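
The tag storage from step 2 can be sketched without any Discord code. The file layout (user ID mapped to an audio file path) and the function names are assumptions for illustration, not the bot's actual implementation. In discord.py, a lookup like `get_tag` would typically be called from the `on_voice_state_update` event when a member joins a channel.

```python
import json
import tempfile
from pathlib import Path


def load_tags(path):
    """Read the user-to-tag mapping, or return an empty one if no file exists."""
    path = Path(path)
    if not path.exists():
        return {}
    with path.open(encoding="utf-8") as f:
        return json.load(f)


def set_tag(path, user_id, tag_file):
    """Store (or overwrite) the producer tag for one user."""
    tags = load_tags(path)
    tags[str(user_id)] = tag_file  # JSON object keys are always strings
    with Path(path).open("w", encoding="utf-8") as f:
        json.dump(tags, f, indent=2)
    return tags


def get_tag(path, user_id):
    """Return the stored tag for a user, or None if they have not set one."""
    return load_tags(path).get(str(user_id))


# Demo in a temporary directory so nothing is left behind on disk.
with tempfile.TemporaryDirectory() as tmp:
    store = Path(tmp) / "tags.json"
    set_tag(store, 1234, "tags/intro.mp3")
    print(get_tag(store, 1234))  # tags/intro.mp3
    print(get_tag(store, 9999))  # None
```

Re-reading the file on every lookup keeps the sketch simple; a long-running bot might cache the mapping in memory and only write on changes.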

Tech Stack

Python: Used for creating the Discord bot and handling the backend logic.

Discord.py: Used for creating the Discord bot.

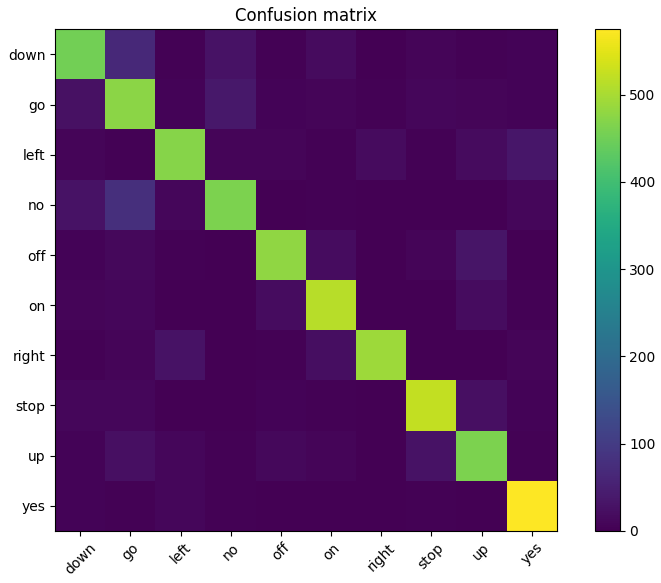

Deepspeech Keyword Spotting

Creating a speech recognition model that can identify keywords based on their MFCCs.

Workflow

1. Dataset Download: I downloaded and organized the Google Speech Commands v0.02 dataset.

2. Feature Extraction: I made an MFCC extraction pipeline to transform raw audio into compact spectral features with reduced noise suitable for machine learning.

3. Dataset Preparation: I split the data into train, validation, and test splits to feed the model.

4. Model Architecture: I built a Convolutional Neural Network (CNN) specifically designed to classify the MFCC-based feature maps.

5. Training & Evaluation: Finally, I trained the model and evaluated its performance using accuracy and a confusion matrix to find its strengths and weaknesses.
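
The dataset preparation in step 3 can be sketched as a seeded shuffle followed by slicing. The split fractions, file names, and function name are assumptions, not the values used in the project.

```python
import random


def split_dataset(items, val_frac=0.1, test_frac=0.1, seed=0):
    """Shuffle once with a fixed seed, then slice into train/val/test lists."""
    items = list(items)
    random.Random(seed).shuffle(items)  # same seed gives the same split
    n_val = int(len(items) * val_frac)
    n_test = int(len(items) * test_frac)
    val = items[:n_val]
    test = items[n_val:n_val + n_test]
    train = items[n_val + n_test:]
    return train, val, test


# Hypothetical file names standing in for the audio clips.
clips = [f"clip_{i:03d}.wav" for i in range(100)]
train_set, val_set, test_set = split_dataset(clips)
print(len(train_set), len(val_set), len(test_set))  # 80 10 10
```

One caveat with a purely random split: recordings from the same speaker can land in both the training and the test set, which may make test accuracy look better than it would be on unseen speakers.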

Tech Stack

Python: Used for basic scripting and for importing machine learning and data manipulation/visualization modules.

PyTorch: Used to create and train the model.

Scikit-learn: Used for the confusion matrix visualization, as well as the classification report.

Jupyter Notebook: Used for a nice workflow as well as visualization of the data and results.

Results

The CNN achieved a validation accuracy of ~84% after 10 epochs, with a training accuracy of 90%, indicating a reasonable fit without severe overfitting. The precision and recall scores, which both average 0.85, suggest that the model performs consistently across most keywords, though certain classes (such as “go” and “down”) show lower recall.

The confusion matrix reveals that most misclassifications occur between acoustically similar words (e.g., "no", "go" and "down", or "up" and "off"), which is expected in short speech segments.
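
Reading the most frequent mistakes off a confusion matrix can be sketched in a few lines. The labels below are made up for illustration and are not the model's predictions; the function names are hypothetical. In the project itself the matrix came from Scikit-learn.

```python
from collections import Counter


def confusion_counts(y_true, y_pred):
    """Count every (true label, predicted label) pair."""
    return Counter(zip(y_true, y_pred))


def top_confusions(y_true, y_pred, n=3):
    """Return the n most frequent misclassifications as ((true, pred), count)."""
    errors = Counter({
        pair: count
        for pair, count in confusion_counts(y_true, y_pred).items()
        if pair[0] != pair[1]  # keep only the off-diagonal cells
    })
    return errors.most_common(n)


# Toy labels, made up for illustration.
y_true = ["no", "no", "go", "go", "up", "off", "down"]
y_pred = ["go", "no", "no", "no", "up", "up", "down"]

for (truth, pred), count in top_confusions(y_true, y_pred):
    print(f"{truth!r} predicted as {pred!r}: {count}x")
```

Sorting the off-diagonal cells by count makes pairs of acoustically similar words stand out immediately, without scanning the full matrix by eye.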

CV Website (this website)

A website that displays my skills, experience, and some of my career projects.

Website Workflow

1. UI/UX Design: I created a modern-looking interface with a focus on smooth scrolling and a responsive layout.

2. Frontend Architecture: I built the core structure using HTML.

3. Interactivity & Animations: I used the GSAP library (GreenSock Animation Platform) to handle scroll-triggered animations between sections.

4. Scripts: I wrote JavaScript logic to manage state, scroll calculations, form submissions, and much more.

Tech Stack

HTML: Used for creating the base layout.

CSS: Used to further improve the layout as well as do styling for each element.

JavaScript: Used for the logic behind transitions, scrolling logic, a navigation bar in the header, a dynamic sliding bar for the projects section, the sending of emails through the website, and a lot of button logic.